What Else Science Requires of Time (That Philosophers Should Know)

Science appears to have a great many other implications about the nature of time that are not discussed in the main Time article. This article is one of the three supplements to that main article. The other two are Frequently Asked Questions about Time and Special Relativity: Proper Times, Coordinate Systems, and Lorentz Transformations (by Andrew Holster).

Table of Contents

- What are Theories of Physics?

- Relativity Theory

- Quantum Mechanics

- Statistical Predictions

- Quantum Leaps, Quantum Waves, and Duality

- The Copenhagen Interpretation and Complementarity

- Superposition and Schrödinger’s Cat

- Randomness and Indeterminism

- The Measurement Problem and Collapse

- Hidden Variables

- The Many-Worlds Interpretation

- Heisenberg’s Uncertainty Principle

- Virtual Particles, Quantum Foam and Wormholes

- Entanglement

- Decoherence

- Non-Locality

- Objective Collapse Interpretations

- Quantum Tunneling

- Approximate Solutions

- Emergent Time and Quantum Gravity

- Quantum Information

- The Standard Model

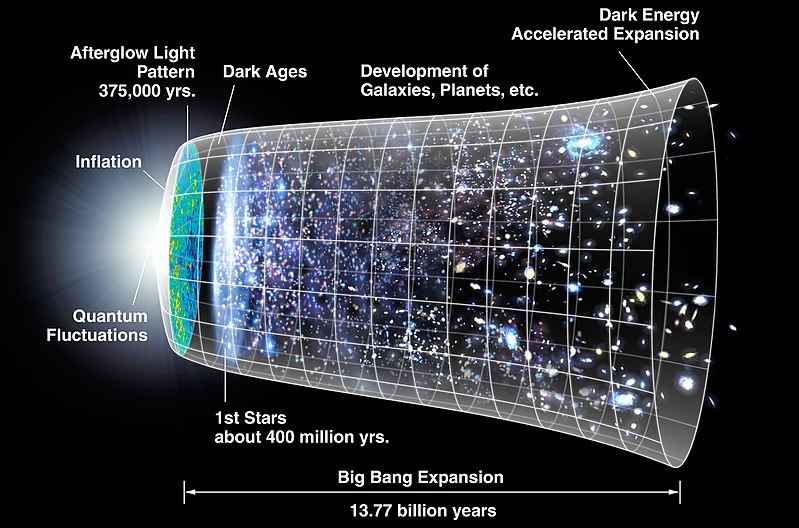

- The Big Bang

- Infinite Time

- Time-Reversibility and Time-Reversal Symmetry

1. What are Theories of Physics?

One of the principal philosophical assumptions in physics is that the world makes sense and we can understand it. The main tool for doing this is a theory. More precisely it is the creation and application of a precise theory that has been observationally or experimentally confirmed to the satisfaction of the relevant experts. Unfortunately, the term theory has many other senses, even in physics. When someone says, “That is just a theory,” they usually mean some explanation or proposal is sketchy and unconfirmed.

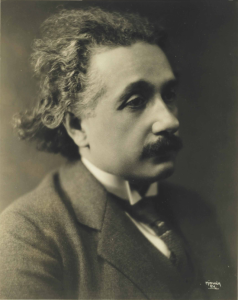

The primary goal of physics is to find a theory in a special, technical sense, not in the sense of an explanation as in the remark, “My theory is that the mouse stole the cheese,” nor in the sense of a prediction as in the remark, “My theory is that the mouse will steal the cheese.” An example of a theory in the intended and more technical sense is the General Theory of Relativity; it has precise, quantitative laws expressed using mathematics; it is well-confirmed; and it is key to explaining a wide variety of phenomena. Philosophers do not agree on why mathematics is so successful in developing theories of nature, but see the article “The Applicability of Mathematics in Physics” for a discussion of this point.

A theory’s laws describe specific, physically possible patterns of events; so, if a law does not allow certain behavior, then (according to the theory) the behavior is not physically possible even though it might be logically possible. The most important laws tend to be the dynamic laws that say of any situation at one time what will happen next, or infinitesimally later.

Ideally the confirmed theories of physics explain what we already know, predict what we don’t, and help us understand what we can. There are other important ideals. We would like the theories to increase our ability to manipulate and control nature for our benefit. We expect each theory to be logically consistent, and we expect our collection of theories either to be mutually consistent or else for there to be understandable reasons why they are not. Also, a theory is more firmly accepted if it can explain a wide variety of phenomena that intuitively would be considered to be unrelated. For example, Newton’s theory of gravity explains why ripe apples fall down from trees and why the Moon moves as it does through the night sky. Before Newton, the two phenomena were assumed to be unrelated, brute facts of nature.

Physicists expect their theories to agree with reality in the sense of accounting for the results of observations and experiments, or, as philosophers say, the theory is expected to be “empirically adequate.” Whether the fundamental theories ideally are more than empirically adequate, whether they are also true or at least approximately true, has caused considerable controversy among philosophers of science. The philosopher Hilary Putnam is noted for arguing that the success of precise theories in physics would be a miracle if they were not at least approximately true. Instrumentalists disagree. They say that all theories of science are merely effective “instruments” designed for explanatory and predictive success. A scientific theory’s claims are neither true nor false. By analogy, a shovel is an effective instrument for digging, but a shovel is neither true nor false.

Some philosophers envision science to be a march toward a single, true, final theory. Others have a very different, more pluralistic vision, that science is a toolbox of frameworks. Each is to some extent useful in different situations, but none is deserving of being called “comprehensive” and “true.”

Physicists hope their theories can have a minimum number of laws and a minimum number of assumptions. For example, they would like to avoid having to specify the specific values of numerical constants in their theories, the so-called free parameters that cannot be calculated and must be determined by measurement. An example is the mass of the electron. It has to be measured; it cannot be predicted. That the number of free parameters can be minimized and the number of independent laws can be minimized is only a hope. It is not an a priori truth. Nevertheless, averaging over the history of physics, more and more phenomena are being explained with fewer and fewer laws. This has led to the hope of finding a set of fundamental laws explaining all phenomena at least in principle, one in which it would be clear how the currently accepted fundamental laws of relativity theory and quantum theory and other scientific theories are approximately true. This hope is the hope for a successful theory of quantum gravity. That theory is sometimes called a “theory of everything.” The name is pretentious because having this theory does not automatically yield a cure for cancer or the time when Julius Caesar saw his eighty-fourth fig leaf.

Although there are many exceptions among the non-fundamental laws, our fundamental dynamic laws satisfy the Markov Assumption that the future depends on the present, not on the present plus the past. But it is not known a priori that all dynamic laws should be Markovian. In the social sciences most laws are usually non-Markovian; the subject at time t will do x at time t + 1 depending not just on the state at time t but also on the subject’s financial history, toilet training, and history of being bullied on the school playground. Non-Markovian laws violate locality in the temporal dimension.
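The contrast between Markovian and non-Markovian laws can be illustrated with a minimal Python sketch. The two rules below, `markov_next` and `non_markov_next`, are hypothetical toy rules invented for this illustration and are not laws of any real science; the point is only that two different histories ending in the same present state have the same future under the first rule but not under the second.

```python
def markov_next(present):
    """Toy Markovian rule: the next value depends only on the present value."""
    return 0.5 * present + 1.0


def non_markov_next(history):
    """Toy non-Markovian rule: the next value depends on the whole history (its mean)."""
    return 0.5 * (sum(history) / len(history)) + 1.0


# Two different pasts that arrive at the same present state, 1.0.
history_a = [0.0, 2.0, 1.0]
history_b = [5.0, 3.0, 1.0]

# Under the Markovian rule the two futures agree, because only the present matters.
same_future = markov_next(history_a[-1]) == markov_next(history_b[-1])

# Under the non-Markovian rule the two futures differ, because the past matters too.
different_future = non_markov_next(history_a) != non_markov_next(history_b)

print(same_future, different_future)
```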

Since the time of Newton, the laws created by physicists have placed limitations on how one configuration of the objects in a physical system of objects at a single time is related to another configuration at a later time. There definitely should be limitations because the universe is not created anew each moment with its old configuration having nothing to do with its new one. The meta-assumption that the best laws are dynamic laws describing the time evolution of a system from an initial time has historically dominated physics. But some philosophers of physics in the 21st century have suggested pursuing other kinds of laws, non-dynamic laws. For example, maybe some or all of the ideal laws would be like the laws of the game Sudoku. Those laws are not dynamic. They only allow you to check whether a completed sequence of moves made in the game is allowable; but for any point in time during the game they do not tell you the next move, as would a dynamic law.
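The Sudoku comparison can be sketched in Python. The function name `is_allowed` and the two example grids are assumptions made for this illustration. Note what the function does and does not do: it accepts or rejects a completed grid as a whole, and nothing in it says which cell to fill in next.

```python
def is_allowed(grid):
    """Return True if a completed 9x9 grid satisfies the Sudoku rules."""
    digits = set(range(1, 10))
    rows = [set(row) for row in grid]
    cols = [set(col) for col in zip(*grid)]
    boxes = [
        {grid[r][c] for r in range(br, br + 3) for c in range(bc, bc + 3)}
        for br in (0, 3, 6)
        for bc in (0, 3, 6)
    ]
    # Every row, column, and 3x3 box must contain each digit 1..9 exactly once.
    return all(group == digits for group in rows + cols + boxes)


# A valid completed grid built from a simple shifting pattern (illustrative only).
solved = [[(3 * (r % 3) + r // 3 + c) % 9 + 1 for c in range(9)] for r in range(9)]

# Swap two cells in the first row: the row is still fine, but two columns now repeat a digit.
broken = [row[:] for row in solved]
broken[0][0], broken[0][1] = broken[0][1], broken[0][0]

print(is_allowed(solved), is_allowed(broken))
```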

Regarding the term “fundamental law,” if law A and law B can be explained by law C, then law C is considered to be more fundamental than A and B. This claim has two, usually implicit presuppositions: (1) A, B, and C are mutually consistent, and (2) C is not simply equivalent to the conjunction “A and B.” The word “basic” is often used synonymously with “fundamental.”

Here is the opinion of the influential theoretical cosmologist Stephen Hawking about the nature of scientific laws:

I believe that the discovery of these laws has been humankind’s greatest achievement…. The laws of nature are a description of how things actually work in the past, present and future…. But what’s really important is that these physical laws, as well as being unchangeable, are universal [so they apply to everything everywhere all the time].

Some theories are expressed fairly precisely, and some are expressed less precisely. All other things being equal, the more precise the better. If they have important simplifying assumptions but still give helpful explanations of interesting phenomena, then they are often said to be models. Very simple models are said to be toy models (“Let’s consider a cow to be a perfect cube, and assume 4.2 is ten”). However, physicists do not always use the terms this way. Very often they use the terms “theory” and “model” interchangeably. For example, the Standard Model of particle physics is a model, but more accurately it would be said to be a theory in the primary sense used in this section. All physicists recognize this, but, for continuity with historical usage of the term, physicists have never bothered to replace the word “model” with “theory.”

For our fundamental theories of physics, the standard philosophical presupposition is that any dynamical law describes how the system evolves from a state at one time into a state at another time. All the dynamical laws in our fundamental theories of relativity and quantum mechanics are differential equations (or inequalities). These have infinitely many solutions that describe infinitely many situations. The equations are meant to be solved for a specific situation that provides the initial values or “initial conditions” (a.k.a. “boundary conditions”) for the variables within the equations. A single solution to the equations can be used as a prediction of how those values will change.
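As a rough illustration of how one differential equation has many solutions and how initial conditions select one of them, here is a minimal Python sketch. It assumes a deliberately simple law (Newtonian motion under uniform gravity of 9.8 m/s², not a law of relativity or quantum mechanics) and a crude Euler stepping scheme; the names `evolve` and `uniform_gravity` are hypothetical.

```python
def evolve(x0, v0, accel, t_end, dt=1e-3):
    """Step dx/dt = v, dv/dt = accel(x) forward from the initial conditions (x0, v0)."""
    x, v = x0, v0
    for _ in range(round(t_end / dt)):
        a = accel(x)
        # Simple Euler update: use the current state to estimate the state dt later.
        x, v = x + v * dt, v + a * dt
    return x, v


def uniform_gravity(x):
    """Assumed toy force law: constant downward acceleration in m/s^2."""
    return -9.8


# Same law, two different initial conditions, two different solutions.
dropped = evolve(x0=10.0, v0=0.0, accel=uniform_gravity, t_end=1.0)
thrown = evolve(x0=10.0, v0=5.0, accel=uniform_gravity, t_end=1.0)

print(dropped, thrown)
```

The law itself (the function `uniform_gravity`) is the same in both runs; only the initial values differ, and each run yields its own prediction of the height and velocity one second later.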

Most researchers say a theory ideally should tell us how the system being studied would behave under perturbations or small changes in the initial conditions, for example, if the initial density of Schwarzschild’s sphere were slightly changed and if the shape were not exactly spherical. Knowing how a system would behave under different conditions helps us understand the causal structure of the system, which is what philosophers also call the counterfactual structure. This structure, when understood, gives the physicist a “feel” for the equations and their solutions.

A theory of physics always contains a set of standard ways to link its statements to the real, physical world. A theory might link the variable “t” to time as measured with a standard clock, and link the constant “M” to the best measurement of the mass of the Earth. In general, the mathematics in mathematical physics is used to create mathematical representations of real entities and their states and behaviors. That is what makes physics an empirical science, unlike pure mathematics.

Do the laws of physics actually govern us? In Medieval Christian theology, the laws of nature were considered to be God’s commands, but today saying nature “obeys” scientific laws or saying that nature is “governed” by laws is considered by scientists to be a harmless metaphor. Scientific laws are called “laws” because they constrain what can happen; they imply this will happen and that will not. It was Pierre Laplace who first declared that the fundamental scientific laws should be hard and fast rules with no exceptions.

Are the basic laws of science just emergent patterns of the universe’s behavior that humans discover and find to be useful, or do the laws somehow pre-exist the universe and act to bring the universe into existence? Philosophers’ positions on laws divide into two camps, Humean and anti-Humean. Anti-Humeans consider scientific laws to be bringing nature forward into existence. It is as if laws are causal agents. Some anti-Humeans agree with Aristotle that whatever happens is because parts of the world have essences and natures, and the laws are describing these essences and natures. This position is commonly accepted in the manifest image. Humeans, on the other hand, consider scientific laws simply to be useful patterns among the mosaic of events that very probably will hold in the future. The patterns summarize the behavior of nature. The patterns do not “lay down the law for what must be.” In response to the question of why these patterns and not other patterns, some Humeans say they are patterns described with the most useful concepts for creatures with brains like ours (and other patterns might be more useful for extraterrestrials). More physicists are Humean than anti-Humean. More philosophers are anti-Humean than Humean. For a deeper discussion of these issues, see the article Laws of Nature.

All fundamental laws of relativity theory are time-reversible. Time-reversibility implies the fundamental laws do not notice any difference between the future direction and the past direction. The second law of thermodynamics does notice this difference because it says entropy tends to increase toward the future; so the theory of thermodynamics is not time-reversible, but it is also not a fundamental theory. As quantum mechanics is standardly interpreted, time-reversibility fails for quantum measurements; this issue is discussed in more detail in the section below on the theory of quantum mechanics.
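Time-reversibility of a dynamical law can be made concrete with a small Python sketch. It assumes a toy Newtonian system (a unit mass on a unit spring, with acceleration equal to minus the position) and the velocity Verlet stepping scheme, chosen here because that scheme is itself time-reversible; the function names are hypothetical. The system is run forward, its velocity is flipped, and the same law is applied again for the same number of steps.

```python
def verlet_step(x, v, dt, accel):
    """Advance position x and velocity v by one velocity Verlet step."""
    a = accel(x)
    x_new = x + v * dt + 0.5 * a * dt * dt
    a_new = accel(x_new)
    v_new = v + 0.5 * (a + a_new) * dt
    return x_new, v_new


def run(x, v, steps, dt=0.01, accel=lambda x: -x):
    """Apply the same law repeatedly; the default accel is the assumed toy spring."""
    for _ in range(steps):
        x, v = verlet_step(x, v, dt, accel)
    return x, v


x0, v0 = 1.0, 0.0
x1, v1 = run(x0, v0, 1000)    # evolve toward the future
x2, v2 = run(x1, -v1, 1000)   # flip the velocity and apply the very same law

# Up to rounding error, the system retraces its path back to the initial state.
print(abs(x2 - x0), abs(-v2 - v0))
```

Nothing in the law had to be changed for the return trip; only the state was reversed. A law with friction added would not pass this check.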

Time-translation invariance is a meta-law that implies all instants are equivalent, that is, indistinguishable, and that the laws on Tuesday are the same as the laws on Friday. This is not implying that if you bought an ice cream cone on Tuesday, you will buy one on Friday. All experts agree that the invariance holds over short time intervals, or at least is not significantly violated for periods less than a million years. A translation in time of 13.8 billion years to a first moment, if there were such a moment, would be translation to a special moment with no earlier moment, so there is at least one exception to the claim that all moments are indistinguishable. A deeper question is whether any of the laws we have now might change in the future. The default answer is “no,” but this is just an educated guess. Any evidence that a fundamental law fails will be treated by some physicists as evidence that it was never a law to begin with, while it will be treated by others as proof that time-translation invariance fails. Hopefully a future consensus will be reached one way or the other.

Regarding the divide between science and pseudoscience, the leading answer is that:

what is really essential in order for a theory to be scientific is that some future information, such as observations or measurements, could plausibly cause a reasonable person to become either more or less confident of its validity. This is similar to Popper’s criteria of falsifiability, while being less restrictive and more flexible (Dan Hooper).

a. The Core Theory

Some physical theories are fundamental, and some are not. Fundamental theories are foundational in the sense that not all their laws can be derived from the laws of other physical theories even in principle. For example, the second law of thermodynamics is not fundamental, nor are the laws of plate tectonics in geophysics or the law of natural selection in biology despite their being critically important to their respective sciences. The following two theories are fundamental in physics: (i) the general theory of relativity, and (ii) quantum mechanics. Their amalgamation is what Frank Wilczek called the Core Theory, the theory of everything physical except gravity. More specifically, the quantum mechanics used here is a version of quantum field theory that includes the Standard Model of elementary particle physics that describes the behavior of fundamental particles and all their forces or interactions other than gravity.

Nearly all scientists believe this Core Theory holds not just in our solar system, but all across the universe, and it held yesterday and will hold tomorrow. Wilczek claimed:

[T]he Core has such a proven record of success over an enormous range of applications that I can’t imagine people will ever want to junk it. I’ll go further: I think the Core provides a complete foundation for biology, chemistry, and stellar astrophysics that will never require modification. (Well, “never” is a long time. Let’s say for a few billion years.)

This implies one could think of biology as applied quantum theory.

The Core Theory uses the term time, but it does not use the terms time’s arrow or now. The concept of time in the Core Theory is primitive or “brute.” It is not definable. The Core Theory does not include the big bang theory or any other theory of cosmology.

What physicists do not yet understand is the collective behavior of the particles of the Core Theory—such as why some humans get cancer and others do not. But it is believed by nearly all physicists that however this collective behavior does get explained, doing so will not require any revision in the Core theory, and its principles will underlie any such explanation. Reductionists in physics would say other theories of physics can be reduced to the Core Theory, but not vice versa.

The key claim is that the Core Theory can be used in principle to adequately explain the behavior of people, galaxies, leaves, and molecules. The hedge phrase “in principle” is important. One cannot replace it with “in practice” or “practically.” Practically there are many limitations on the use of the Core Theory. Here are some of the limitations. Leaves are too complicated. There are too many layers of emergence needed from the level of the Core Theory to the level of leaf behavior. Also, there is a margin of error in any measurement of anything. There is no way to acquire the leaf data precisely enough to deduce the exact path of a specific leaf falling from a certain tree 300 years ago. Even if this data were available, the complexity of the needed calculations would be prohibitive. Commenting on these various practical limitations for the study of galaxies rather than leaves, the cosmologist Andrew Pontzen said “Ultimately, galaxies are less like machines and more like animals—loosely understandable, rewarding to study, but only partially predictable.”

The Core has been tested in many extreme circumstances and with great sensitivity, so physicists have high confidence in it. There is no doubt that for the purposes of doing physics the Core Theory provides a demonstrably superior representation of reality to that provided by its alternatives.

But all physicists know the Core is not strictly true and complete, and they know that some features will need revision in the sense of being modified or extended. Physicists are motivated to discover how to revise it because such a discovery can lead to great praise from the rest of the physics community. Nobel Prizes would be won. Wilczek says the Core will never need modification for understanding (in principle) the special sciences of biology, chemistry, stellar astrophysics, computer science and engineering, but he would agree that the Core needs revision in order to adequately explain why 95 percent of the universe consists of dark matter and dark energy, why the universe has more matter than antimatter, why neutrinos change their identity over time, and why the energy of empty space is as small as it is. One philosophical presupposition here is that the new Core Theory should be a single, logically consistent theory. Some physicists emphasize that this presupposition has the epistemological status of a hope.

The Core Theory presupposes that time exists, that it is a feature of space-time, and that space-time is more fundamental than time. Within the Core Theory, relativity theory allows space to curve, ripple, and expand; and this curving, rippling, and expanding can vary from one time to another and from one place to another. Space could even have the shape of a doughnut (a torus). Quantum theory does not allow any of these features, although a future revision of quantum theory within the Core Theory is expected to allow all of them.

In the Core Theory, the word time is a theoretical term, and time is treated somewhat like a single dimension of space. Informally, space is the set of all point-locations, and time is the set of all point-times, what are informally called the instants. Space-time is a set of all point-events. Space-time is presumed to have a minimum of four dimensions and also to be a continuum, with time being represented as a distinguished, one-dimensional sub-space of space-time. But time is not a spatial dimension. Because the time dimension is so different from a space dimension, physicists often say space-time is (3+1)-dimensional rather than 4-dimensional.

Both relativity theory and quantum theory presuppose that three-dimensional space is isotropic (rotation symmetric) and homogeneous (spatial-translation symmetric) and time-translation symmetric (yesterday’s laws are tomorrow’s laws). However, some results in the 21st century in cosmology cast doubt on this latter symmetry; there may be exceptions. Regarding all these symmetries, the laws need to obey the symmetries, but specific physical systems do not. For example, your body is a physical system that could become very different if you walk across the road at noon on Tuesday instead of Friday, even though the Tuesday physical laws are also the Friday laws.

The Core Theory presupposes that all dynamical laws should have the form of describing how a state of a system at one time turns into a different state at another time. This implies that taking into account the entire history of past states is not required to make a claim about what will happen next.

The Core Theory does not presuppose or explicitly mention consciousness. The typical physicist believes consciousness is contingent; it happens to exist but it is not a necessary feature of the universe. That is, consciousness happened to evolve because of fortuitous circumstances, but it might not have. Many philosophers throughout history have disagreed with this treatment of consciousness, especially the idealist philosophers of the 19th century.

[For the experts: More technically, the Core Theory is the renormalized, effective quantum field theory that includes both the Standard Model of particle physics and the weak field limit of Einstein’s General Theory of Relativity in which gravity is very weak and space-time is almost flat, and no assumption is made about the character or even the existence of space and time below the Planck length and Planck time.]

2. Relativity Theory

Of all the theories of science, relativity theory has had the greatest impact upon our understanding of the nature of time. According to this theory, time can curve and stretch. Time is also strange because it has no independent, objective existence apart from a more fundamental entity called “four-dimensional space-time.” Einstein’s theory rejects Newton’s assumption that time behaves the same way for everyone everywhere.

When the term relativity theory is used, it usually refers to the general theory of relativity of 1915, but sometimes it refers to the special theory of relativity of 1905, and sometimes it refers to both, so one needs to be alert to what is being referred to. Both theories are theories of time. Both have been well-tested; and they are almost universally accepted among physicists as applying correctly to those situations in reality in which their assumptions are true. Today’s physicists understand them better than Einstein himself did. “Einstein’s twentieth-century laws, which—in the realm of strong gravity—began as speculation, became an educated guess when observational data started rolling in, and by 1980, with ever-improving observations, evolved into truth” (Kip Thorne). Strong gravity, but not too strong. In the presence of extremely strong gravity, such as found near the center of a black hole, general relativity theory is believed to break down.

Special relativity is not a specific theory but rather a general framework for theories. General relativity is a generalization of special relativity that removes its restrictions to uncurved space-time and to there being no gravitational forces.

Overall, the main difference between the two is that, in general relativity, space-time does not simply exist passively as a background arena for events. Instead, space-time is dynamical in the sense that changes in the distribution of matter and energy in any region of space-time are directly related to changes in the curvature of space-time in that region. John Wheeler summarized this point by saying that space-time tells matter how to move and matter tells space-time how to curve.

Einstein’s key equations in his general theory imply that energy and mass distort the geometry of space-time, and as the distribution of energy and mass changes, so does the geometry. Although the Einstein field equations in his general theory:

are exceedingly difficult to manipulate, they are conceptually fairly simple. At their heart, they relate two things: the distribution of energy in space, and the geometry of space and time. From either one of these two things, you can—at least in principle—work out what the other has to be. So, from the way that mass and other energy is distributed in space, one can use Einstein’s equations to determine the geometry of that space. And from that geometry, we can calculate how objects will move through it (Dan Hooper).
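For readers who want to see the two sides that Hooper describes, the Einstein field equations are commonly written in the following form. The notation is the standard textbook one and is not taken from the quotation above: the left side describes the geometry of space-time (the Einstein tensor built from the metric, plus a cosmological-constant term), and the right side describes the distribution of energy and momentum.

```latex
% G_{\mu\nu}: Einstein tensor (curvature of space-time, built from the metric g_{\mu\nu})
% \Lambda: cosmological constant;  T_{\mu\nu}: stress-energy tensor
% G: Newton's gravitational constant;  c: speed of light
G_{\mu\nu} + \Lambda\, g_{\mu\nu} = \frac{8\pi G}{c^{4}}\, T_{\mu\nu}
```

Reading the equation from right to left corresponds to “matter tells space-time how to curve”; using the resulting geometry to find the paths of objects corresponds to “space-time tells matter how to move.”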

The main article “Time” introduced the differing viewpoints of Einstein and Bergson on the nature of time. There is a gray area about whether the two disagreed about what time is or instead disagreed about whether their own perspective on time was more important, or more useful, or more fundamental than their opponent’s. Here are some helpful remarks on this gray area: “For Einstein, scientific time is the only time that matters and the only time we can rely on. Bergson, however, believes that scientific time is derived by abstraction, even in the sense of extraction, from a more fundamental time. The plurality of times envisaged by the theory of Relativity does not, for him, contradict the philosophical intuition of the existence of a single time” (from the publisher of the book Einstein vs. Bergson: An Enduring Quarrel on Time by Alessandra Campo and Simone Gozzano).

An important assumption of general relativity theory (GR) is the principle of equivalence: gravity is basically acceleration. That is, gravitational forces cannot be distinguished from forces produced by acceleration, all other things being equal. That is why, when you are in an elevator with the doors closed far out in space and the elevator is accelerating at the right rate, it can feel exactly like you are in an elevator at rest on the surface of the Earth. Einstein said the discovery of this principle was the happiest moment of his life.

GR has many assumptions that are rarely mentioned explicitly. One is that gravity did not turn off for three seconds during the year 1777 in Australia. A more general one is that the theory’s fundamental laws are the same regardless of what time it is. This feature is called time-translation invariance. Everyone believes this principle holds locally, but many cosmologists believe this feature does not hold for translations on the order of millions of years as the expansion of space becomes significant.

The special theory is inconsistent with Newton’s law of gravity. Einstein’s theory of general relativity was his solution to that problem of inconsistency.

The relationship between the special and general theories is slightly complicated. Both theories are about the motion of objects and both approach agreement with Newton’s theory the slower the speed of those objects, and the weaker the gravitational forces involved, and the lower the energy of those objects. General relativity implies the truth of special relativity in all infinitesimal regions of space-time. General relativity holds in all reference frames, but special relativity holds only for inertial reference frames, namely non-accelerating frames. The frame does not accelerate, but objects in the frame are allowed to accelerate. Special relativity implies the laws of physics are the same for all inertial observers, that is, observers who are moving at a constant velocity relative to each other. ‘Observers’ in this sense are also the frames of reference themselves, or they are persons of zero mass and volume making measurements from a stationary position in a coordinate system. These observers need not be conscious beings.

Special relativity allows objects to have mass but not gravity. Also, it always requires a flat geometry—that is, a three-dimensional Euclidean geometry for space and a Minkowskian four-dimensional non-Euclidean geometry for space-time. General relativity does not have those restrictions on geometry. And whereas special relativity is a framework for specific theories, general relativity is a very specific theory of gravity, or it is a specific theory if we add in a specification of the distribution of matter-energy throughout the universe. Both the special and general theory imply that Newton’s two main laws of F = ma and F = GmM/r² hold only approximately, and they hold better for slower speeds and weaker gravitational strengths.
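One way to get a feel for how good the Newtonian approximation is at different speeds is to compute the Lorentz factor, the factor by which the momentum of special relativity exceeds the Newtonian momentum mv. The short Python sketch below is only an illustration; the function name `lorentz_factor` and the two sample speeds are choices made here, not part of the original discussion.

```python
import math


def lorentz_factor(beta):
    """Return gamma = 1 / sqrt(1 - beta^2), where beta is speed as a fraction of light speed."""
    if not 0.0 <= beta < 1.0:
        raise ValueError("beta must satisfy 0 <= beta < 1")
    return 1.0 / math.sqrt(1.0 - beta * beta)


# At 1 percent of light speed the correction to Newtonian momentum is tiny.
slow = lorentz_factor(0.01)

# At 90 percent of light speed the Newtonian value is off by more than a factor of two.
fast = lorentz_factor(0.9)

print(slow, fast)
```

The closer the factor is to 1, the better Newton’s F = ma (with momentum taken to be mv) describes the motion, which is why everyday speeds never revealed the discrepancy.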

General relativity is geometric. What this means is that when an artillery shell flies through the air and takes a curved path in space relative to the ground because of a gravitational force acting upon it, what is really going on is that the artillery shell is taking a geodesic or the straightest path of least energy in space-time, which is a curved path as viewed from a higher space dimension. That is why gravity or gravitational attraction is not really a force but rather is a curvature of space-time.

The theory of relativity is generally considered to be based on causality. What this means is that:

One can take general relativity, and if you ask what in that sophisticated mathematics is it really asserting about the nature of space and time, what it is asserting about space and time is that the most fundamental relationships are relationships of causality. This is the modern way of understanding Einstein’s theory of general relativity….If you write down a list of all the causal relations between all the events in the universe, you describe the geometry of spacetime almost completely. There is still a little bit of information that you have to put in, which is counting, which is how many events take place…. Causality is the fundamental aspect of time. (Lee Smolin).

(An aside for the experts: The general theory of relativity requires space-time to have at least four dimensions, not exactly four dimensions. Technically, any space-time, no matter how many dimensions it has, is required to be a differentiable manifold with a metric tensor field defined on it that tells what geometry it has at each point. General relativistic space-times are manifolds built from charts involving open subsets of R⁴. General relativity does not consider a time to be a set of simultaneous events that do or could occur at that time; that is a Leibnizian conception. Instead, general relativity specifies a time in terms of the light cone structures at each place. A light cone at a space-time point specifies what events could be causally related to that point, not just what events are causally related to it.)

Relativity theory implies time is a continuum of instantaneous times that is free of gaps just as the mathematical line is free of gaps between points. This continuity of time was first emphasized by the philosopher John Locke in the late seventeenth century, but it is meant here in a more detailed, technical sense that was developed for calculus only toward the end of the 19th century.

According to both relativity theory and quantum theory, time is not discrete or quantized or atomistic. Instead, the structure of point-times is a linear continuum with the same structure as the mathematical line or the real numbers in their natural order. For any point of time, there is no next time because the times are packed together so tightly. Time’s being a continuum implies that there is a non-denumerably infinite number of point-times between any two non-simultaneous point-times. Some philosophers of science have objected that this number is “too large,” and we should use Aristotle’s notion of potential infinity and not the late 19th century notion of a completed infinity. Nevertheless, accepting the notion of an actual nondenumerable infinity is the key idea used to solve Zeno’s Paradoxes and to remove inconsistencies in calculus, so for these reasons the number of point-events is not considered to be “too large.”

The fundamental laws of physics assume the universe is a collection of point events that form a four-dimensional continuum, and the laws tell us what happens after something else happens or because it happens. These laws describe change but do not themselves change. At least that is what laws are in the first quarter of the 21st century, but one cannot know a priori that this is always how laws must be. Even though the continuum assumption is not absolutely necessary for describing what we observe, so far it has proved to be too cumbersome to revise our theories in order to remove the assumption while retaining consistency with all our experimental data. Calculus has proven its worth.

No experiment has directly revealed the continuum structure of time. No experiment is so fine-grained that it could show point-times to be infinitesimally close together, although there are possible experiments that could show the assumption to be false if it were false and if the graininess of time were to be large enough.

Not only is there much doubt about the correctness of relativity in the tiniest realms, there is also uncertainty about whether it works differently on cosmological scales than it does at the scale of atoms, houses, and solar systems, but so far there are no rival theories that have been confirmed.

A rival theory intended to incorporate into relativity theory what is correct about the quantum realm is often called a theory of quantum gravity. Einstein claimed in 1916 that his general theory of relativity needed to be replaced by a theory of quantum gravity. The physics community generally agrees with him, but that theory has not been found so far. A great many physicists of the 21st century believe a successful theory of quantum gravity will require quantizing time. But this is just an educated guess. The majority view is that a quantum of gravity, the graviton particle, does exist, but it just has not yet been detected.

Regardless of whether there are or are not gravitons, the question remains as to whether there are or are not atoms of time. If there are, then there can be a next instant and a previous instant. It is conjectured that, if time were discrete, then a good estimate for a shortest duration is 10⁻⁴⁴ seconds, the so-called Planck time. The Planck time is the time it takes light to traverse one Planck length. No physicist can yet suggest a practical experiment that is sensitive to this tiny scale. For more discussion, see (Tegmark 2017).
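The relation between the Planck time and the Planck length can be checked numerically. The following is a minimal sketch, assuming the standard definitions of the Planck length, √(ħG/c³), and the Planck time, √(ħG/c⁵), together with approximate CODATA values for the constants; the function names are illustrative only.

```python
import math

# Approximate CODATA values in SI units (assumed here for illustration).
HBAR = 1.054571817e-34  # reduced Planck constant, J*s
G = 6.67430e-11         # Newtonian gravitational constant, m^3 kg^-1 s^-2
C = 299_792_458.0       # speed of light in a vacuum, m/s


def planck_length() -> float:
    """Planck length in meters: sqrt(hbar * G / c^3)."""
    return math.sqrt(HBAR * G / C**3)


def planck_time() -> float:
    """Planck time in seconds: sqrt(hbar * G / c^5)."""
    return math.sqrt(HBAR * G / C**5)


if __name__ == "__main__":
    print(f"Planck length: {planck_length():.3e} m")
    print(f"Planck time:   {planck_time():.3e} s")
    # Light-crossing time of one Planck length, for comparison.
    print(f"length / c:    {planck_length() / C:.3e} s")
```

With these inputs the Planck time comes out at a few times 10⁻⁴⁴ seconds, and dividing the Planck length by c reproduces it, as the paragraph above states.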

The special and general theories of relativity imply that to place a reference frame upon space-time is to make a choice about which part of space-time is the space part and which is the time part. No choice is objectively correct, although some choices are very much more convenient for some purposes. This relativity of time is one of the most significant philosophical implications of both the special and general theories of relativity.

Since the discovery of relativity theory, scientists have come to believe that any objective description of the world can be made only with statements that are invariant under changes in the reference frame. That is why saying, “It occurred at noon” does not have a truth value unless a specific reference frame is implied, such as one fixed to Earth with time being the time that is measured by our civilization’s standard clock. This relativity of time to reference frames is behind the remark that Einstein’s theories of relativity imply time itself is not objectively real whereas space-time is.

Regarding relativity to frame, Newton would say that if you are seated in a vehicle moving along a road, then your speed relative to the vehicle is zero, but your speed relative to the road is not zero. Einstein would agree. However, he would surprise Newton by saying the length of your vehicle is slightly different in the two reference frames, the one in which the vehicle is stationary and the one in which the road is stationary. Equally surprising to Newton, the duration of the event of your drinking a cup of coffee while in the vehicle is slightly different in those two reference frames. These relativistic effects are called space contraction and time dilation, respectively. Both length and duration are frame dependent and, for that reason, say physicists, they are not objectively real characteristics of objects. Neither are the shapes of objects. Speeds also are relative to reference frame, with one exception. The speed of light in a vacuum has the same value c in all frames that are allowed by relativity theory. Space contraction and time dilation change in tandem so that the speed of light in a vacuum is always the same number. Convincing evidence for time dilation was first discovered in 1938 by Ives and Stilwell.
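To give a feel for how small these effects are at everyday speeds, here is a minimal sketch using the standard Lorentz factor of special relativity, γ = 1/√(1 − v²/c²). The vehicle length, coffee-drinking duration, and speeds are hypothetical values chosen for illustration, and the function names are not from any library.

```python
import math

C = 299_792_458.0  # speed of light in a vacuum, m/s


def lorentz_factor(speed: float) -> float:
    """Return gamma = 1 / sqrt(1 - v^2/c^2) for a speed in m/s below c."""
    beta = speed / C
    if not 0.0 <= beta < 1.0:
        raise ValueError("speed must satisfy 0 <= v < c")
    return 1.0 / math.sqrt(1.0 - beta * beta)


def duration_in_road_frame(duration_in_vehicle: float, speed: float) -> float:
    """Time dilation: an event in the vehicle lasts longer in the road frame."""
    return lorentz_factor(speed) * duration_in_vehicle


def length_in_road_frame(rest_length: float, speed: float) -> float:
    """Space contraction: the vehicle is shorter in the road frame."""
    return rest_length / lorentz_factor(speed)


if __name__ == "__main__":
    # Hypothetical inputs: a 5 m vehicle and a 300 s coffee break.
    for label, v in [("car at 30 m/s", 30.0), ("probe at 0.6 c", 0.6 * C)]:
        gamma = lorentz_factor(v)
        print(label)
        print(f"  gamma - 1         = {gamma - 1.0:.3e}")
        print(f"  coffee break (s)  = {duration_in_road_frame(300.0, v):.12f}")
        print(f"  vehicle length (m)= {length_in_road_frame(5.0, v):.12f}")
```

At highway speeds the factor differs from 1 by only a few parts in 10¹⁵, which is near the limit of double-precision arithmetic and helps explain why Newton never noticed the effect.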

Another surprise for Newton would be to learn that relativity theory implies he was mistaken to believe in the possibility of arbitrarily high velocities. According to relativity theory, nothing that once went slower than the speed c (the speed of light in a vacuum) can go faster than c, regardless of the reference frame. This is an interesting fact about time because speed is distance per unit of time. The constant c in the equation E = mc² is called “the speed of light,” but the constant c is not only the speed of light. According to relativity theory, it is the maximum speed of any causal influence, light or no light. This speed limit applies to all forms of matter, energy, and information.

It is not quite correct to say that, according to relativity theory, nothing can go faster than the speed of light. The remark needs some clarification, else it is incorrect. Here are four ways to go faster than the speed of light. (1) First, the medium needs to be specified. c is the speed of light in a vacuum. The speed of light in certain crystals can be much less than c, say 40 miles per hour, and if so, then a racehorse outside the crystal could outrun the light beam in the crystal. (2) Second, the limit c applies only to objects within space relative to other objects within space. However, the general theory of relativity places no restrictions on how fast an object can go that has always been going faster than c. (3) GR allows space itself to expand so that two clusters of galaxies have a relative speed of recession greater than c if the intervening space expands sufficiently rapidly. (4) A shadow can go faster than c. For example, imagine you hold a laser on Earth that casts a cone of light upon Jupiter. Move a finger on your other hand quickly through the beam. Your finger’s shadow on Jupiter moves across Jupiter faster than c. When this happens, no information or photon moves faster than c. So, when we say nothing can go faster than light, we need a precise definition of “nothing.” Is a shadow nothing?

Technically expressed, Einstein’s point above is that no physical event has causes or effects outside the event’s backward or forward light cones. That is, no physical event is space-like separated from its causes or effects.

Some physicists believe Einstein is mistaken about this, and they believe the assumption that nothing goes faster than light will eventually be shown to be false because, in order to make sense of Bell’s Theorem in quantum theory, two entangled particles must be able to affect each other faster than this, perhaps instantaneously. The majority of physicists are unconvinced.

The notion of a reference frame in general relativity is a bit different from that in special relativity or classical physics. In general relativity a time is indicated by slicing space-time into surfaces of simultaneity.

Perhaps the most philosophically controversial feature of relativity theory is that it allows great latitude in selecting the classes of simultaneous events, as shown in this diagram. Because there is no single objectively correct frame to use for specifying which events are present and which are past (there are only more or less convenient ones), one philosophical implication of the relativity of time is that it seems to be easier to defend McTaggart’s B-theory of time and more difficult to defend McTaggart’s A-theory. The A-theory implies the temporal properties of events such as “is happening now” or “happened two weeks ago” are intrinsic to the events and are objective, frame-free properties of those events. So, Einstein’s relativity to frame makes it difficult to defend absolute time and the A-theory.

Relativity theory challenges other ingredients of the manifest image of time. For two point-events A and B, common sense says they either are simultaneous or not, but according to relativity theory, if A and B are distant enough from each other and occur close enough in time to be within each other’s absolute elsewhere, then event A can occur before event B in one reference frame, but after B in another frame, and simultaneously with B in yet another frame. To make the same point in other terminology, for two events that are spacelike separated, there is no fact of the matter regarding which occurred before which. Their temporal ordering is indeterminate. In the language of McTaggart’s A and B theory, unlike for the A-series ordering of events, there are multiple B-series orderings of events, and no single one is correct. No person before Einstein ever imagined time is so strange. Not all temporal ordering is relative, though, only the temporal ordering of events that are spacelike separated, so neither of the two events could have caused the other, even partially.

The special and general theories of relativity provide accurate descriptions of the world when their assumptions are satisfied. Both have been carefully tested. One of the simplest tests of special relativity is to show that the characteristic half-life of a specific radioactive material is longer when it is moving faster.
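The half-life test can be sketched numerically. The example below assumes the standard time-dilation relation (half-life in the laboratory frame equals γ times the half-life at rest) and uses a hypothetical material with a rest half-life of one second; none of the numbers come from an actual experiment.

```python
import math


def lorentz_factor(beta: float) -> float:
    """Return gamma for a speed given as a fraction beta = v/c of light speed."""
    if not 0.0 <= beta < 1.0:
        raise ValueError("beta must satisfy 0 <= beta < 1")
    return 1.0 / math.sqrt(1.0 - beta * beta)


def lab_half_life(rest_half_life: float, beta: float) -> float:
    """Half-life as judged in the frame where the material moves at speed beta."""
    return lorentz_factor(beta) * rest_half_life


def surviving_fraction(lab_time: float, rest_half_life: float, beta: float) -> float:
    """Fraction of the sample still undecayed after lab_time in the lab frame."""
    return 0.5 ** (lab_time / lab_half_life(rest_half_life, beta))


if __name__ == "__main__":
    # Hypothetical sample: rest half-life of 1 s, observed for 2.5 s.
    for beta in (0.0, 0.6, 0.99):
        print(
            f"beta = {beta:4.2f}: half-life = {lab_half_life(1.0, beta):7.3f} s, "
            f"surviving fraction = {surviving_fraction(2.5, 1.0, beta):.4f}"
        )
```

The faster the sample moves, the larger the fraction that survives a fixed laboratory interval, which is the signature such experiments look for.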

The special theory does not mention gravity, and it assumes there is no curvature to space-time, but the general theory requires curvature in the presence of mass and energy, and it requires the curvature to change as their distribution changes. The presence of gravity in the general theory has enabled the theory to be used to explain phenomena that cannot be explained with either special relativity or Newton’s theory of gravity or Maxwell’s theory of electromagnetism.

The equations of general relativity are much more complicated than are those of special relativity. To give one example of this, the special theory clearly implies there is no time travel to events in one’s own past. Experts do not agree on whether the general theory has this same implication because the equations involving the phenomena are too complex for them to solve directly. A slight majority of physicists do believe time travel to the past is allowed by general relativity. Because of the complexity of Einstein’s equations, all kinds of tricks of simplification and approximation are needed in order to use the laws of the theory on a computer for all but the simplest situations. Approximate solutions are a practical necessity.

Regarding curvature of time and of space, the presence of mass at a point implies intrinsic space-time curvature at that point, but not all space-time curvature implies the presence of mass. Empty space-time can still have curvature, according to general relativity theory. This unintuitive point has been interpreted by many philosophers as a good reason to reject Leibniz’s classical relationism. That claim was first made by Arthur Eddington.

Two accurate, synchronized clocks do not stay synchronized if they undergo different gravitational forces. This is a second kind of time dilation, in addition to dilation due to speed. So, a clock’s time depends on the clock’s history of both speed and gravitational influence. Gravitational time dilation would be especially apparent if a clock were to approach a black hole. The rate of ticking of a clock approaching the black hole slows radically upon approach to the horizon of the hole as judged by the rate of a clock that remains safely back on Earth. This slowing is sometimes described as “time slowing down,” but this metaphor may misleadingly suggest that time itself has a rate, which it doesn’t. After a clock falls through the event horizon, it can no longer report its values to a distant Earth, and when it reaches the center of the hole not only does it stop ticking, but it also reaches the end of time, the end of its proper time.
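The slowing near a horizon can be illustrated with a simplified model. The sketch below assumes a non-rotating (Schwarzschild) black hole and a clock that hovers at a fixed radius outside the horizon rather than falling in, and it treats the Earth-bound clock as if it were infinitely far away. Under those assumptions the hovering clock ticks at √(1 − r_s/r) times the rate of the distant clock, where r_s = 2GM/c² is the horizon radius. The function names and the one-solar-mass example are illustrative.

```python
import math

G = 6.67430e-11      # gravitational constant, m^3 kg^-1 s^-2 (approximate)
C = 299_792_458.0    # speed of light in a vacuum, m/s
SOLAR_MASS = 1.989e30  # kg (approximate)


def schwarzschild_radius(mass_kg: float) -> float:
    """Horizon radius r_s = 2GM/c^2 of a non-rotating black hole, in meters."""
    return 2.0 * G * mass_kg / C**2


def tick_rate_ratio(radius_m: float, mass_kg: float) -> float:
    """Ticks of a hovering clock at radius_m per tick of a far-away clock."""
    r_s = schwarzschild_radius(mass_kg)
    if radius_m <= r_s:
        raise ValueError("a static clock cannot hover at or inside the horizon")
    return math.sqrt(1.0 - r_s / radius_m)


if __name__ == "__main__":
    r_s = schwarzschild_radius(SOLAR_MASS)
    print(f"horizon radius for one solar mass: {r_s:.1f} m")
    for multiple in (100.0, 10.0, 2.0, 1.01, 1.0001):
        ratio = tick_rate_ratio(multiple * r_s, SOLAR_MASS)
        print(f"r = {multiple:>8} r_s -> relative tick rate {ratio:.5f}")
```

The ratio falls toward zero as the hovering radius approaches the horizon. A freely falling clock requires a different calculation, so this is only a rough guide to the case described in the text.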

The general theory of relativity has additional implications for time. It implies that space-time can curve or warp locally or cosmically, and it can vibrate or jiggle. Whether it curves into a real fourth spatial dimension is unknown, but it definitely curves as if it were curving into such an extra dimension. Here is a common representation of the situation which pictures our 3D space from the outside reference frame as being a 2D curved surface that ends infinitely deep at a point of infinite mass density, the hole’s singularity.

This picture is helpful in many ways, but it can also be misleading because space need not really be curved into this extra dimension that goes downward in the diagram, but the 2D space does really need to be compacted more and more as one approaches the black hole’s center. That is, the picture implies there are more dimensions than there really are.

Let’s explore the microstructure of time in more detail, beginning with the distinction between continuous and discrete space. In the mathematical physics that is used in both relativity theory and quantum theory, the ordering of instants by the happens-before relation of temporal precedence is complete in the sense that there are no gaps in the sequence of instants. Any interval of time is a continuum, so the points of time form a linear continuum. Unlike physical objects, physical time and physical space are believed to be infinitely divisible—that is, divisible in the sense of the actually infinite, not merely in Aristotle’s sense of potentially infinite. Regarding the density of instants, the ordered instants are so densely packed that between any two there is a third so that no instant has a very next instant. Regarding continuity, time’s being a linear continuum implies that there is a nondenumerable infinity of instants between any two non-simultaneous instants.

The actual temporal structure of events can be embedded in the real numbers, at least locally, but how about the converse? That is, to what extent is it known that the real numbers can be adequately embedded into the structure of the instants, at least locally? This question is asking for the justification of saying time is not atomistic. The problem here is that the shortest duration ever measured is about 250 zeptoseconds. A zeptosecond is 10⁻²¹ second. For times shorter than about 10⁻⁴³ second, which is the physicists’ favored candidate for the duration of an atom of time, science has no experimental grounds for the claim that between any two events there is a third. Instead, the justification of saying the reals can be embedded into the structure of the instants is that (i) the assumption of continuity is very useful because it allows the mathematical methods of calculus to be used in the physics of time; (ii) there are no known inconsistencies due to making this assumption; and (iii) there are no better theories available. The qualification earlier in this paragraph about “at least locally” is there in case there is time travel to the past. A circle is continuous and one-dimensional, but it is like the real numbers only locally.

One can imagine two empirical tests that would reveal time’s discreteness if it were discrete—(1) being unable to measure a duration shorter than some experimental minimum despite repeated tries, yet expecting that a smaller duration should be detectable with current equipment if there really is a smaller duration, and (2) detecting a small breakdown of Lorentz invariance. But if any experimental result that purportedly shows discreteness is going to resist being treated as a mere anomaly, perhaps due to there somehow being an error in the measurement apparatus, then it should be backed up with a confirmed theory that implies the value for the duration of the atom of time. This situation is an instance of the kernel of truth in the physics joke that no observation is to be trusted until it is backed up by theory.

The General Theory of Relativity implies gravitational waves will be produced by any acceleration of matter. Drop a ball from the Leaning Tower of Pisa, and this will shake space-time near the tower and produce ripples that will emanate in all directions from the Tower. The existence of these ripples was confirmed in 2015 by the LIGO observatory (Laser Interferometer Gravitational-Wave Observatory) when it detected ripples caused by the merger of two black holes.

For more helpful material about special relativity, see Special Relativity: Proper Times, Coordinate Systems, and Lorentz Transformations.

3. Quantum Mechanics

In addition to relativity theory, the other fundamental theory of physics is quantum mechanics. It was created in the late 1920s. At that time, it was applied to particles and not to fields. In the 1970s, it also was successfully applied to quantum fields via the new version of the theory called “quantum field theory.” This made particles become emergent features of fields. If we can trust contemporary quantum mechanics to apply all across the universe and not just to the universe’s “quantum region” as opposed to its “classical region” (as most 21st century philosophers of physics do believe despite the fact that quantum theory was never conceived in its infancy as a theory for cosmically large scales), then quantum mechanics tells us the underlying ontology of nature, and it implies particles are no longer ontologically basic; fields are. It also implies that information is never destroyed.

The term “quantum mechanics” is commonly used to mean either the classical theory of the 1920s or the improved quantum theory that includes quantum field theory with its Standard Model of particle physics. Context is usually needed in order to tell what the term “quantum mechanics” refers to.

What kind of world is quantum mechanics describing for us? What does it imply about time? There is considerable agreement among the experts that quantum mechanics has deep implications about the nature of time, but there is considerable disagreement among the experts regarding what those implications are.

The three strangest features of quantum mechanical phenomena are its randomness, superposition, and entanglement. We do not notice any of these strange phenomena in our ordinary lives outside a physics laboratory, but they underlie our reality if quantum theory is true. The following sections explore these phenomena plus some other aspects of quantum mechanics.

A surprising feature of quantum theory is that there is no absolute stillness in the universe. Everything jitters or shimmers; both fields and particles. This comment about jittering can be helpful but also misleading. Strictly speaking the wave function that describes the state of any closed system does not jitter; it always has a definite value. It is just that measurements must be described probabilistically in quantum mechanics, and if you were to repeat a measurement of a variable that can be measured you will get a range of different values over time. In this sense, the values of the quantum system’s variables “hop around.” There is more discussion of this feature in the sub-section below on the measurement problem.

Quantum mechanics has multiple competing interpretations regarding how it should be understood and how it should be used in answering the question, “What is really going on?” The interpretations are not simply different attitudes about quantum mechanics nor mere semantic disagreements; they are different attempts to build a fuller theory of quantum mechanics. So, a more accurate label for an interpretation would be “a theory” or perhaps “an outline of a more complete theory.” If the different interpretations did not lead to disagreements about what could in principle be observed, they would be attacked by Leibniz for violating his Principle of the Identity of Indiscernibles, which says to eliminate ontological differences that do not have empirical differences.

a. Statistical Predictions

In the standard Copenhagen interpretation and nearly all competing interpretations, time is treated as being a continuum, just as it is in relativity theory and Newtonian theory, but change over time is treated in quantum mechanics very differently than in all previous theories—because of (1) quantum discreteness and (2) instantaneous, discontinuous collapse of the wave function during any measurement, a collapse from many possibilities to a single, actual one.

Although this is a disputed ontological claim, many experts say the wave function of quantum mechanics is an accurate description of a physical system at a single time. It is analogous to what other theories call the state of the system. During a measurement of some property of the system, according to the main interpretation, the wave function collapses or updates from a superposition of multiple possible states of the system to a single state with the measured variable having a single value. For example, an electronic system is created to have 2, 3, 4, or 5 volts in a wire of a certain electrical device, and during the measurement the wave function which was in a superposition of four possible states changes abruptly to a single state in which the voltage is 3 volts. The measurer then says the voltage was measured to have 3 volts (with a probability of one), whereas the same measurer might only be able to say before the measurement that the voltage had a probability of 1/4 of being 3 volts. According to the standard interpretation of quantum mechanics, there is nothing that fixes in advance the outcome of the measurement, so nature requires statistics at its most fundamental level. Einstein resisted this conclusion, famously insisting that God does not play dice.
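The voltage example can be mimicked with a toy simulation. The sketch below assumes the Born rule (the probability of an outcome is the squared magnitude of its amplitude) and assumes four equal amplitudes of 1/2, which is what a probability of 1/4 per outcome requires. It models only the statistics of measurement and collapse; it is not a simulation of any real device, and the function names are illustrative.

```python
import random


def born_probabilities(amplitudes):
    """Map each outcome to |amplitude|^2, normalized so the values sum to 1."""
    weights = {outcome: abs(a) ** 2 for outcome, a in amplitudes.items()}
    total = sum(weights.values())
    return {outcome: w / total for outcome, w in weights.items()}


def measure(amplitudes, rng=random):
    """Pick one outcome at random by the Born rule and return the collapsed state."""
    probs = born_probabilities(amplitudes)
    outcomes = list(probs)
    result = rng.choices(outcomes, weights=[probs[o] for o in outcomes], k=1)[0]
    # After the measurement the state assigns probability one to the result.
    collapsed = {o: (1.0 if o == result else 0.0) for o in outcomes}
    return result, collapsed


if __name__ == "__main__":
    # Superposition of 2, 3, 4, and 5 volts with equal (assumed) amplitudes.
    state = {2: 0.5, 3: 0.5, 4: 0.5, 5: 0.5}
    print("before:", born_probabilities(state))
    voltage, state_after = measure(state)
    print("measured:", voltage, "volts")
    print("after: ", born_probabilities(state_after))
```

Repeating the measurement on many freshly prepared copies of the system would yield each voltage about a quarter of the time, while no single run is predictable in advance.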

b. Quantum Leaps, Quantum Waves, and Duality

Quantum mechanics is often said to be our theory of small things, and relativity is our theory of large things. These are crude remarks since quantum mechanics applies to everything, but in their day-to-day work most physicists do not need to think about quantum mechanics if they are considering phenomena larger than a nanometer.

Quantum mechanics says many phenomena are discrete, but not all. This discreteness is not shown directly in the equations, but rather in two other ways:

(1) Quantum mechanics represents every physical system as a wave, even an atom or an airplane or the entire universe; but for any wave there is a smallest possible amplitude it can have, called a “quantum.” Smaller amplitudes simply do not occur. Classical physics does not have this intuitively odd feature. As Hawking quipped: “It is a bit like saying that you can’t buy sugar loose in the supermarket, it has to be in kilogram bags.” Max Planck first suggested the concept of the quantum as a useful mathematical tool, but it was Einstein who first took the ontological leap and argued that quanta needed to be considered a feature of reality.

(2) The possible solutions to some of the equations of quantum mechanics form a discrete set, not a continuous one. For example, the possible values of certain variables such as energy states of an electron confined within an atom are allowed by the equations to have values that change to another value only in multiples of minimum discrete steps in a shortest time. Changing by a single step is sometimes called a “quantum jump” or “quantum leap.” When applying the quantum equation to a system containing only a single electron bound to a hydrogen atom, the solutions imply the electron can have -13.6 electron volts of energy or -3.4 electron volts of energy, but no value between those two. This illustrates how energy levels are quantized. However, in the equation, the time variable is continuous and so not quantized.
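The two energies quoted in item (2) are the first two members of a discrete series. As a minimal sketch, the bound-state energies of hydrogen follow the well-known formula E_n = −(13.6 eV)/n² for n = 1, 2, 3, …, which ignores small corrections such as fine structure and the finite mass of the nucleus. The constant and function names below are illustrative.

```python
# Approximate Rydberg unit of energy in electron volts (about 13.6 eV).
RYDBERG_ENERGY_EV = 13.6057


def energy_level(n: int) -> float:
    """Energy in eV of the hydrogen level with principal quantum number n."""
    if n < 1:
        raise ValueError("n must be a positive integer")
    return -RYDBERG_ENERGY_EV / n**2


def photon_energy(n_upper: int, n_lower: int) -> float:
    """Energy in eV released when the electron jumps from n_upper to n_lower."""
    return energy_level(n_upper) - energy_level(n_lower)


if __name__ == "__main__":
    for n in range(1, 5):
        print(f"n = {n}: E = {energy_level(n):8.3f} eV")
    print(f"jump from n = 2 to n = 1 releases {photon_energy(2, 1):.2f} eV")
```

No level lies between n = 1 (about −13.6 eV) and n = 2 (about −3.4 eV), so the smallest possible jump out of the ground state is roughly 10.2 eV.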

The variety of phenomena that quantum mechanics can be used to successfully explain is remarkable. For four examples, it explains: (1) why you can see through a glass window but not a potato, (2) why our Sun has lived so long without burning out, (3) why atoms are stable so that the negatively-charged electrons of an atom do not spiral into the positively-charged nucleus, and (4) why the periodic table of elements has the structure it has. Without quantum mechanics, these four facts (and many others) are brute facts of nature.

Quantum mechanics is our most successful theory in all of science. One especially important success is that the theory has been used to predict the measured value of the anomalous magnetic moment of the electron extremely precisely and accurately. This value is a measure of how much the electron wobbles when traveling through a magnetic field. The predicted value, expressed in terms of a certain number g, is the real number:

g/2 = 1.001 159 652 180 73….

The measured value agrees with this predicted value to this many decimal places. No similar feat of precision and accuracy can be accomplished by any other theory of science.

But quantum mechanics has been tested only for small systems. It has never been tested for cosmic systems such as the universe or the observable universe, yet it is assumed to hold for these cosmic systems.

Under the right physical conditions such as when dealing with a large object (a macroscopic object), quantum theory gives the same results as classical Newtonian theory. In 1927, Paul Ehrenfest first figured out how to deduce (or morph into) Newton’s second law of mechanics F = ma from the corresponding equation in quantum mechanics, namely the Schrödinger equation. That is, he showed under what conditions you can get classical mechanics from quantum mechanics.

The ontology of a theory is what it says exists fundamentally. Regarding the effect of quantum theory on ontology, the majority viewpoint among philosophers of physics in the twenty-first century is that potatoes, galaxies, and brains are fairly stable patterns over time of interacting quantized fields. So, every entity except these fields is an emergent entity. Also, the multi-decade debate about whether an electron is a point object or instead an object with a definite non-zero cross-section (width) has been settled by quantum field theory. It is neither. An electron has no definite diameter; it takes up all of space. It is a “bump” or “packet of waves” that vibrates a million billion times every second and that has a narrow peak with relatively high amplitude that trails off to trivially lower and lower amplitude throughout the electron field; yet that field fills all of space. A sudden disturbance in a field will cause wave packets to form, thus permitting particle creation in the field. Until quantum field theory was accepted, the fact of particle creation was a mysterious brute fact.

If we are “sticklers” and use a definition that requires a fundamental particle to be an object with a precise, finite location, then quantum mechanics now implies there are no fundamental particles. For continuity with the past, particle physicists are not sticklers. They still do call themselves “particle physicists” and do say they study “particles”; but they know this is not what is really going on. The term is not intended to be taken literally or in the sense of the classical physics of relativity or Newtonian theory. Similarly, the term “forces” is commonly used by physicists even though they know the word does not mean to them what it means to ordinary persons or to classical physicists because a force is really an interaction involving complex particle exchange. Very often, though, the force-talk and particle-talk are useful to physicists and their popularizers because the terms succeed in conveying an idea well enough while avoiding needless complexities.

Scientists sometimes say “Almost everything physical is known to be made of quantum fields.” The hedge word “almost” is there because they mean everything physical except gravity. No treatment of gravity in terms of quantum fields (as of 2026) is known to be successful.

The principal scientific problem about quantum mechanics is that it is consistent with special relativity but inconsistent with general relativity, which is our theory of gravity, yet physicists have a high degree of trust in all these theories. Here are three examples of the inconsistency. (i) General relativity theory implies black holes have point-size singularities within themselves, and quantum theory implies they do not. (ii) General relativity implies black holes are black, and quantum theory implies they shine with Hawking radiation. (iii) General relativity implies that the universe had zero volume at the Big Bang, and quantum theory implies it did not.

Quantum mechanics is well tested and relatively well understood mathematically, yet it is not well understood intuitively or informally or philosophically or conceptually. Its requirements on reality are very difficult to visualize. This is what Richard Feynman, one of the founders of quantum field theory, meant when he said he did not really understand his own theory. Some experts draw the conclusion from this that human language is not a very good descriptive tool for nature because mathematical physics is so much better.

Surprisingly, because of competing interpretations, physicists still do not agree on the exact formulation of the theory and how it should be applied to the world. They do not agree on what quantum theory’s axioms would be if it were to be axiomatized. This failure stands in the way of solving problem 6 of David Hilbert’s list of 23 open problems in mathematics that he said in 1900 need to be solved in coming centuries. Problem 6 is the problem of formalizing all accepted scientific theories.

Quantum mechanics has many interpretations, but there is a problem. “New interpretations appear every year. None ever disappear,” said physicist N. David Mermin. His joke has a point. This supplement article describes only four of the many different interpretations: the Copenhagen Interpretation, the Hidden Variables Interpretation, the Many-Worlds Interpretation, and the Objective Collapse Interpretation. The Copenhagen Interpretation has a strong plurality of supporters, about one-third, but not a majority. It is the “classical” interpretation.

During the 20th century, most physicists resisted the need to address the question “What is really going on in quantum mechanics?” Their mantra was “Shut up and calculate”: do not explore the philosophical questions involving quantum mechanics, and abandon thoughts about ontology. Discussion of the philosophical questions did not appear in college textbooks. Turning away from this head-in-the-sand approach, Andrei Linde said, “We [theoretical physicists] need to learn how to ask correct questions, and the very fact that we are forced right now to ask…questions that were previously considered to be metaphysical, I think, is to the great benefit of all of us.”

If we want to use quantum mechanics to describe the behavior of a system of particles over time, then we start with the system’s initial state, such as its wave function Ψ(x,t) for each point x of space at the initial instant t, and then compute the wave function for other places and times. The wave function is a function of all the possible values of all the possible particles or fields at a location and time of a closed system. Equivalently, it is a function of the “configuration space.” When a measurement is made, we use the resulting value to update the wave function. It is an open question in ontology whether the wave function is a direct description of reality rather than merely a mathematical tool for making correct predictions about measurements.

Max Born, one of the fathers of quantum mechanics, first suggested interpreting quantum waves not literally as waves in space and time but rather as waves of probability affecting our knowledge and so not as something objective. Stephen Hawking explained it this way:

In quantum mechanics, particles don’t have well-defined positions and speeds. Instead, they are represented by what is called a wave function. This is a number at each point of space. The size of the wave function gives the probability that the particle will be found in that position. The rate at which the wave function varies from point to point gives the speed of the particle. One can have a wave function that is very strongly peaked in a small region. This will mean that the uncertainty in position is small. But the wave function will vary very rapidly near the peak, up on one side and down on the other. Thus the uncertainty in the speed will be large. Similarly, one can have wave functions where the uncertainty in the speed is small but the uncertainty in the position is large.

The wave function contains all that one can know of the particle, both its position and its speed. If you know the wave function at one time, then its values at other times are determined by what is called the Schrödinger equation. Thus one still has a kind of determinism, but it is not the sort that Laplace envisaged (Hawking 2018, 95-96).

Given a wave function at one time, we insert this into the Schrödinger wave equation that says how the wave function changes over time. That equation is the partial differential equation:

iħ ∂Ψ(x,t)/∂t = HΨ(x,t).

The letter i abbreviates the square root of negative one. The symbol ħ (h-bar) is Planck’s constant divided by 2π. H is the Hamiltonian operator on the wave function Ψ. This Schrödinger wave equation is the quantum version of Newton’s three laws of motion, and it indicates the rate of change of the system and what it changes into. Knowing the Hamiltonian of the quantum mechanical system is analogous to knowing the forces involved in a system obeying Newtonian mechanics. The abstract space (or arrangement) of all possible wave functions is called Hilbert Space.
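The deterministic character of this evolution can be illustrated with a minimal sketch. It assumes the simplest possible case, one not discussed in this article: a system whose state is a combination of two states of definite energy, so that the Hamiltonian merely multiplies each component by its energy E and the Schrödinger equation for each component has the solution Ψ(t) = exp(−iEt/ħ)Ψ(0). The function names, the energy values, and the choice of units in which h-bar equals 1 are all hypothetical, chosen only for illustration.

```python
import cmath
import math

HBAR = 1.0  # assumption: units chosen so that h-bar equals 1


def evolve(amplitudes, energies, t):
    """Evolve the amplitudes of definite-energy components through a time t.

    Each component with energy E satisfies i*hbar*dPsi/dt = E*Psi,
    whose solution is Psi(t) = exp(-i*E*t/hbar) * Psi(0).
    """
    return [a * cmath.exp(-1j * e * t / HBAR)
            for a, e in zip(amplitudes, energies)]


def norm_squared(amplitudes):
    """Total probability: the sum of the squared magnitudes of the amplitudes."""
    return sum(abs(a) ** 2 for a in amplitudes)


# Hypothetical initial state: an equal mix of two energy components.
initial = [1 / math.sqrt(2), 1 / math.sqrt(2)]
energies = [1.0, 2.5]  # hypothetical energy values

later = evolve(initial, energies, t=3.0)
restored = evolve(later, energies, t=-3.0)  # run the same law backward

print("total probability at the later time:", norm_squared(later))
print("largest difference after evolving forward then back:",
      max(abs(a - b) for a, b in zip(initial, restored)))
```

In this sketch the total probability stays equal to one, and running the same law backward recovers the initial state up to rounding error. This is the “kind of determinism” Hawking mentions: the state at one time fixes the state at all other times, provided no measurement intervenes.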

The probabilities are computed by “squaring” the wave function. In our example, the state Ψ can be used to show the probability p(x,t) that a certain particle will be measured to be at place x at a future time t, if a measurement were to be made, where

p(x,t) = Ψ*(x,t)Ψ(x,t).

The values of Ψ are complex numbers. Quantum mechanics requires complex numbers; it cannot be formulated just with real numbers. The asterisk designates the complex conjugate, so the “squaring” is an exotic squaring, but let’s not delve any more into the mathematical details. The equation is called the Born Rule. The Born Rule connects the abstract wave function to actual probabilities of measurements of the system’s behavior. It connects theory to nature.
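For readers who find a small computation helpful, here is a hedged sketch of the “squaring” just described. It assumes a hypothetical one-dimensional wave function, a Gaussian packet sampled on a grid of positions, which is an illustrative choice and not an example taken from this article. The packet’s center, width, and wavenumber, along with the grid spacing, are invented values.

```python
import cmath
import math


def gaussian_packet(x, center=0.0, width=1.0, wavenumber=2.0):
    """Unnormalized complex value of a hypothetical Gaussian wave packet at x."""
    envelope = math.exp(-((x - center) ** 2) / (4 * width ** 2))
    phase = cmath.exp(1j * wavenumber * x)
    return envelope * phase


dx = 0.1  # hypothetical grid spacing
grid = [i * dx for i in range(-100, 101)]  # positions from -10 to 10
psi = [gaussian_packet(x) for x in grid]

# Rescale psi so that the probabilities over the whole grid add up to one.
total = sum((p.conjugate() * p).real for p in psi) * dx
psi = [p / math.sqrt(total) for p in psi]

# Born rule: probability density p(x) = Psi*(x) Psi(x), a real number >= 0.
density = [(p.conjugate() * p).real for p in psi]

prob_total = sum(density) * dx
prob_near_center = sum(d for x, d in zip(grid, density) if abs(x) <= 1.0) * dx

print("probability of finding the particle somewhere on the grid:", prob_total)
print("probability of finding it within one unit of the center:", prob_near_center)
```

Although every value of the wave function in this sketch is a complex number, every value of the density is an ordinary non-negative real number, which is what allows it to be read as a probability.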

Experimentally, the wave function can be sampled, but not measured overall. For that reason, the wave function used in practice is always an estimate. The formulation of the function Ψ has been improved since the days of Schrödinger because of advances in developing quantum field theory and its Standard Model of particle physics. But the Schrödinger equation itself has not changed.

An important feature of the quantum state Ψ is that you, the measurer, cannot measure it without disturbing it and altering its properties. “Without disturbing it” means “without collapsing the wave function.”

Before Einstein, almost all physicists believed being a particle and being a wave are mutually exclusive properties. Yet, on most interpretations of quantum mechanics (but not on the Bohm interpretation), fundamental particles are considered to be “wavicles,” namely entities that have both a wave and a particle nature, but which are never truly either because the two properties are mutually exclusive. This dual feature of nature is called “wave-particle duality.” There is a serious tension in the two features that shows itself when we consider a light beam because a light wave can split in two, but an individual photon cannot.

In 1909, Einstein was the first person to emphasize the wave-particle duality of light as a real feature of nature. This influenced de Broglie’s idea of wave-particle duality for electrons and other material particles, and not just for light.

The electron that once was conceived to be a tiny particle orbiting an atomic nucleus is now better conceived as something larger and less precisely defined spatially; the electron is a cloud that completely surrounds the nucleus, a cloud of possible places where the electron is most likely to be found if it were to be measured. The electron or any other particle is no longer well-conceived as having either a sharply defined edge or a sharply defined trajectory. A wave has no single location and it cannot have a single, sharp, well-defined trajectory. The location and density distribution of the electron cloud around an atom is the product of two opposite tendencies: (1) the electron wave “wants” to spread out away from the nucleus just as a water wave wants to spread out away from the point where the stone fell into the pond, and (2) the electron-qua-particle is a negatively-charged particle that “wants” to reach the positive electric charge of the nucleus because opposite charges attract.

c. The Copenhagen Interpretation and Complementarity

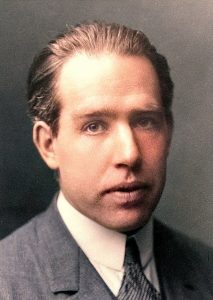

The Copenhagen Interpretation has become the orthodox interpretation of quantum mechanics. Unfortunately, it is vague. It contains an assortment of beliefs about what physicists are supposed to do with the mathematical formalism of quantum mechanics and how they should conceptualize its features. Most significantly for philosophers, it implies reality is observer-dependent. This classical interpretation of quantum mechanics was created by Niels Bohr and Werner Heisenberg and their colleagues in the late 1920s. It is called the Copenhagen Interpretation because Bohr and Heisenberg taught at the University of Copenhagen. According to many of its advocates, it has implications about time reversibility, determinism, the conservation of information, locality, and realism’s promotion of the reality of the world independently of its being observed—namely, that they all fail. The creators of the Copenhagen Interpretation such as Born, Bohr, Heisenberg, von Neumann, Pauli, and Wigner did not agree with each other about what is really going on in a world accurately described by quantum mechanics, but this historical dispute is not described below in any detail.

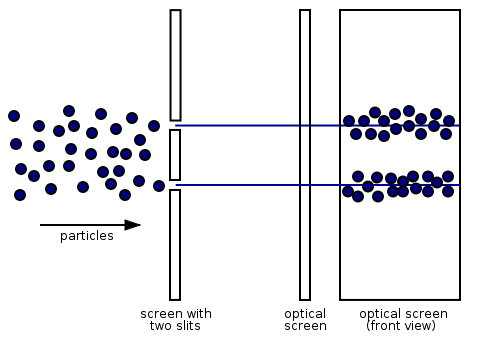

Let’s consider how a simple experiment might reveal why we philosophers and physicists should understand the world in this new way. Thomas Young’s double-slit experiment of 1801 convinced physicists to believe that light is a wave (as Christiaan Huygens had speculated in the 17th century) and not, as Isaac Newton believed, a beam of particles. In the famous quantum version of Young’s double-slit experiment, electrons all having the same energy and being what we now call “coherent” are repeatedly shot toward and through two adjacent, parallel slits in an otherwise impenetrable metal plate. Here is a simplistic diagram of the experimental set up, giving an aerial view of the electrons (black dots) with straight lines showing the approximate paths taken through the slits and onto the optical screen behind the plate, plus a second view of the optical screen as seen face on rather than from above:

The diagram is a bird’s eye view of electrons passing through two slits and then hitting an optical screen that is behind the screen (plate) with the two slits. The optical screen is shown twice, first on the right in an aerial view and then farther to the right in a full frontal view as if it were being viewed from the two slits. The full frontal view shows two jumbled rows on the right where the electrons have collided with the optical screen. The optical screen that displays the dots behind the plate is similar to a computer monitor that displays a pixel-dot when and where an electron collides with it. Think of it as a position measuring device. Bullets, pellets, and sand grains would produce a similar pattern.

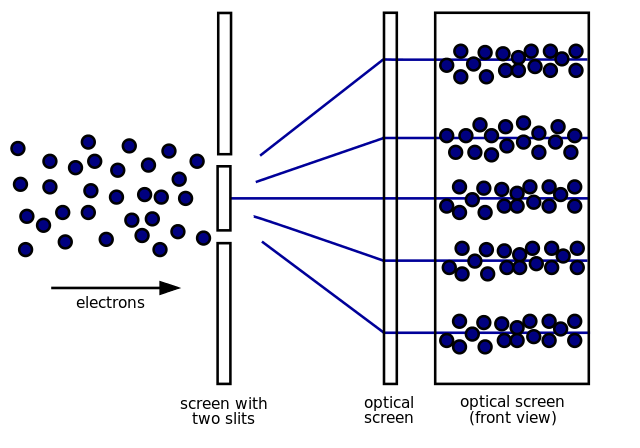

What is especially interesting is that the electrons behave differently if someone observes which slit they passed through. When observed, the electrons create the pattern shown above, but when not observed they leave the pattern shown below:

When unobserved, the impacts build up over time into a pattern of many alternating dark and bright bands on the screen. This pattern is very similar to the pattern obtained by diffraction of light waves or water waves. This suggests the electron is behaving like a wave that went through both slits at once. The incoming wave exits the slits as two waves that interfere either constructively or destructively. When one wave’s trough interferes with another wave’s peak at the screen, no dot is produced. When two crests meet at the screen, there is constructive interference, and the result is a dot. There are multiple, parallel stripes of dots produced along the screen, but only five are shown in the diagram. Stripes farther from the center of the screen are dimmer. Waves have no problem going through two or more slits simultaneously, but classical particles cannot behave this way. Because the collective electron behavior over time looks so much like optical wave diffraction, this is considered to be definitive evidence of electrons behaving as waves. The same pattern of results occurs if other particles are used in place of electrons. The experiment is performed in a vacuum so there are no significant interactions between the incoming particles and any air molecules.
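The difference between the two patterns can be sketched numerically. The sketch below is a simplified model under stated assumptions, not a description of any actual experiment: each slit contributes a complex amplitude whose phase depends only on the distance from that slit to a point on the screen, the amplitudes are added before squaring in the unobserved case, and the separate probabilities are added in the observed case. The wavelength, slit separation, and screen distance are hypothetical values in arbitrary units, and the results are relative intensities rather than normalized probabilities.

```python
import cmath
import math

# Hypothetical geometry, in arbitrary length units.
WAVELENGTH = 1.0
SLIT_SEPARATION = 5.0
SCREEN_DISTANCE = 100.0


def path_amplitude(slit_y, screen_y):
    """Complex amplitude for reaching screen_y by way of the slit at slit_y."""
    distance = math.hypot(SCREEN_DISTANCE, screen_y - slit_y)
    wavenumber = 2 * math.pi / WAVELENGTH
    return cmath.exp(1j * wavenumber * distance) / math.sqrt(2)


def unobserved_intensity(screen_y):
    """Which slit is not observed: add the amplitudes, then square."""
    a1 = path_amplitude(+SLIT_SEPARATION / 2, screen_y)
    a2 = path_amplitude(-SLIT_SEPARATION / 2, screen_y)
    return abs(a1 + a2) ** 2


def observed_intensity(screen_y):
    """Which slit is observed: square each amplitude, then add."""
    a1 = path_amplitude(+SLIT_SEPARATION / 2, screen_y)
    a2 = path_amplitude(-SLIT_SEPARATION / 2, screen_y)
    return abs(a1) ** 2 + abs(a2) ** 2


for y in [0.0, 5.0, 10.0, 15.0, 20.0]:
    print(f"y = {y:5.1f}   unobserved = {unobserved_intensity(y):.3f}"
          f"   observed = {observed_intensity(y):.3f}")
```

In this model the unobserved intensity rises and falls along the screen, reaching twice the observed value at the center and nearly vanishing where the two paths differ by about half a wavelength, while the observed intensity is the same everywhere. The model deliberately ignores the dimming of the stripes farther from the center and the width of each slit.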

Another remarkable feature of this experiment is that the pattern of interference is produced even when the electrons are shot one at a time at the plate several seconds apart. One is tempted to ask: Does an electron know what its earlier fellow electrons did so it can act to produce the proper pattern on the screen?

Philosophers of physics do not agree on what is really going on here. A minority view, sometimes called “quantum Bayesianism,” says quantum theory does not tell you what is happening in nature but only what you can know about nature. This position rejects the standard philosophical position that science describes or represents the physical world.

A less extreme and now highly favored explanation of the double-slit experiment says what is happening is that we are seeing evidence of “wave-particle duality,” namely that a single electron itself has both wave and particle properties, as does other matter. When an electron is unobserved, it is a wave that is an extended object that is located in many places at once, but when it is observed it is an unextended particle having a single, specific location. This mix of two apparently incompatible properties (wave properties and particle properties) is called a “duality,” and the electron is said to behave as a “wavicle.”

The psychologist Jerome Bruner reported that Bohr discovered his complementarity principle when, after his son was caught stealing a pipe and confessed, Bohr realized the impossibility of simultaneously loving his son and wanting justice for the pipe owner. Brooding upon that problem, he thought of the gestalt switch required as one sees a black vase and then sees instead a pair of white human faces in the famous figure-ground trick graphic below.

Influenced by the graphic, “the impossibility of thinking simultaneously about the position and the velocity of a particle occurred to him.” Generalizing upon this impossibility, Bohr produced his principle of complementarity–that some experiments can be designed to reveal the wave nature of reality and some experiments can be designed to reveal the particle nature of reality, but no experiment can do both simultaneously.

Experiments in 1979 first showed that wave–particle duality allows a wavicle to have different ratios of being a particle to being a wave, depending on the situation.

In the first half of the twentieth century, influenced by Logical Positivism which was then dominant in analytic philosophy, some advocates of the Copenhagen interpretation said quantum mechanics shows that our belief that there is something a physical system is doing when it is not being observed is meaningless. In other words, a fully third-person perspective on nature is impossible. In particular, in order to explain the double-slit experiment, Niels Bohr adopted an anti-realist stance by saying there is no determinate, unfuzzy way the world is when it is not being observed. There is only a cloud of possible values for each property of the system that might be measured. So, there is no place where the electron is when it is not being observed; there are just electron “clouds.” Bohr claimed he was a realist, but historians agree that he was not.

This anti-realist position prompted some philosophers to ask for more clarity about which beings should be said to count as being conscious and which do not. An opponent of anti-realism, Albert Einstein, asked a supporter of the Copenhagen Interpretation whether he really believed that the moon exists only when it is being looked at.

d. Superposition and Schrödinger’s Cat

According to most interpretations of quantum theory, the future is a superposition of possibilities. In the two-slit experiment described above, the Copenhagen Interpretation implies that before a measurement is made the experiment’s comprehensive state is a simultaneous superposition or “sum” of two different comprehensive states, one in which the electron goes through the left slit and one in which it goes through the right slit. This is not at all like a tree having a state of being tall and of being green. Those are not comprehensive states of a physical system.